New Models (Including Free Options), All-New Citations, & API Enhancements - UIUC.chat Product Update #4

You’re receiving this email because you signed up for UIUC.chat. We only email you about product updates. This is our 4th email in our two-year existence, but we have over 1,900 git commits and 600 PRs across our frontend and backend. We hope this offers ‘high alpha’ insight into the best uses of LLMs that we’re pushing into production. We are a small team of researchers, and everything we build is free. Enjoy!

Today we're launching:

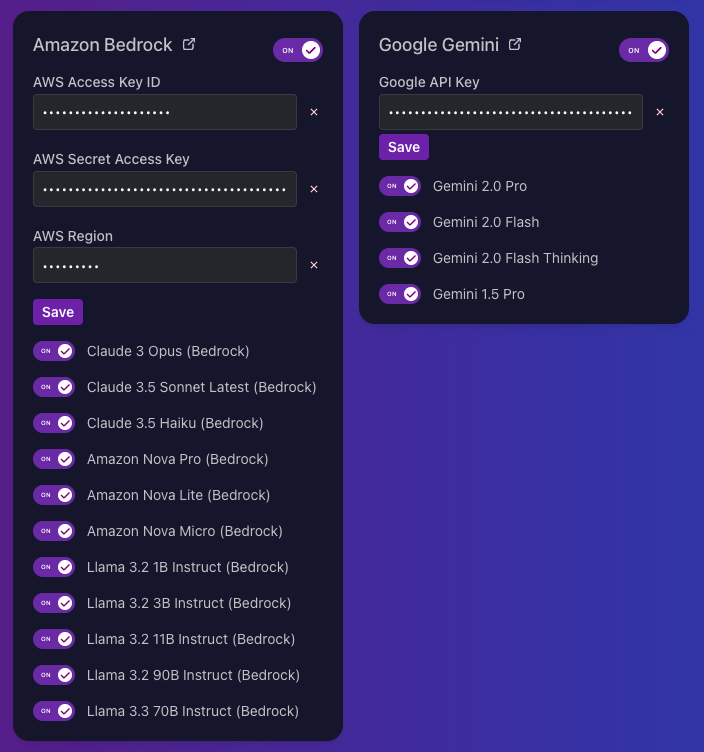

- New LLM Providers: Google Gemini & AWS Bedrock

- New models

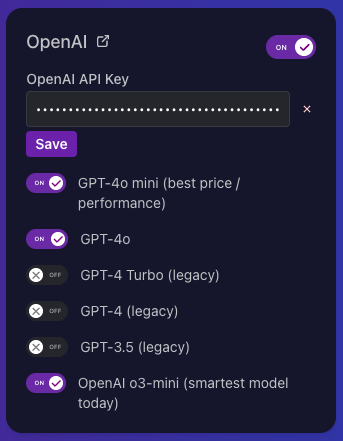

- OpenAI o3-mini 🧠 - just add your OpenAI or Azure API key.

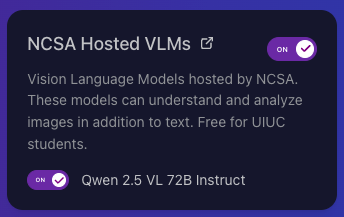

- Qwen 2.5 VL 72B - It's free and, in our experience, better than GPT-4o mini. It's our new default and go-to all-around model.

- All-new citation experience 📌

- Improved web crawling experience 🕸️

- API request builder - easier than ever to take full advantage of our API.

New LLM Providers: Amazon and Google

We now offer the full suite of Amazon Bedrock and Google Gemini models. As always, you have to bring your own API key. Or, use our free self-hosted models described below.

Tap into state-of-the-art (SOTA) models from Anthropic, Amazon, and Meta, all under one roof. Like training on a mountain of data? Now you've got Bedrock!

Gemini 2.0 Flash is free for up to 1 million tokens per minute, but it's the only model we offer where the provider (Google) will collect and train on your usage data. Gemini 2.0 Flash deserves a callout: it's the new cheapest AI model that's still reasonably high quality, and low cost opens up new use cases.

Now we support all the major LLM providers. Enjoy!

OpenAI o3-mini 🧠

In short, it's the world's smartest publicly available model, offered at relatively low cost ($1.10 per million input tokens, $4.40 per million output tokens). It's roughly on par with GPT-4o pricing (actually cheaper per token), so it's worth using o3-mini instead of 4o.
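
To make the pricing concrete, here is a minimal Python sketch that estimates a request's cost from its token counts, using only the o3-mini prices quoted above. The function name and example token counts are illustrative, and prices change, so check OpenAI's pricing page for current rates. Keep in mind that o3-mini's hidden reasoning tokens are billed as output tokens, so the billed output count can exceed the length of the visible answer.

```python
# o3-mini prices quoted above, in USD per million tokens (verify against current pricing).
O3_MINI_INPUT_PER_M = 1.10
O3_MINI_OUTPUT_PER_M = 4.40


def estimate_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_per_m: float = O3_MINI_INPUT_PER_M,
    output_per_m: float = O3_MINI_OUTPUT_PER_M,
) -> float:
    """Estimate cost in USD from token counts and per-million-token prices.

    For o3-mini, output_tokens should include hidden reasoning tokens,
    since those are billed as output.
    """
    return (input_tokens * input_per_m + output_tokens * output_per_m) / 1_000_000


# Example: a 2,000-token prompt with a 500-token answer.
print(f"${estimate_cost_usd(2_000, 500):.4f}")  # prints "$0.0044"
```

Passing another model's prices as `input_per_m` and `output_per_m` lets you compare models on the same workload.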

Our new favorite: Qwen 2.5 VL 72B (Vision Language Model)

We use this as the default for most of our work. We only step up to o3-mini when we need more reasoning power and scientific knowledge, and step down to Qwen 14B for massive dataset processing, where it still offers reasonable tool-calling capabilities.
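
If you want the same tiering in your own scripts, it fits in a tiny lookup table. This is a hypothetical Python sketch: the `Workload` categories and the model label strings are illustrative, not official UIUC.chat identifiers, so substitute the exact model names shown in the model picker or the API request builder.

```python
from enum import Enum


class Workload(Enum):
    """Hypothetical workload categories mirroring the tiers described above."""
    DEFAULT = "default"                  # most everyday work
    HARD_REASONING = "hard_reasoning"    # needs more smarts / scientific knowledge
    BULK_PROCESSING = "bulk_processing"  # massive datasets, with tool calling


# Illustrative labels only; replace with the exact model names your deployment uses.
MODEL_FOR_WORKLOAD = {
    Workload.DEFAULT: "Qwen 2.5 VL 72B",
    Workload.HARD_REASONING: "o3-mini",
    Workload.BULK_PROCESSING: "Qwen 14B",
}


def pick_model(workload: Workload) -> str:
    """Return the model label for a workload tier."""
    return MODEL_FOR_WORKLOAD[workload]


print(pick_model(Workload.BULK_PROCESSING))  # prints "Qwen 14B"
```

Keeping the mapping in one table means swapping in whatever model comes out next is a one-line change.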

All new Citation Experience 📌

We couldn't wait to share this complete redesign of the citation experience. Several users found issues with our previous citation generation, so we did a huge revamp of both the prompting and how we present sources. Now, inline citations display the name of the source, and a dedicated citation sidebar shows the cited sources at the top, with all the other sources that were shared with the AI listed under "More Sources".

Web crawling 🕸️

We now show web crawling progress in real time, so you can watch as your crawl discovers content.

API Request Builder

The new request builder makes using our API as easy as it gets. Just enter your details, then copy and paste the generated request into your terminal or code.

Pro tip: if a project has zero documents, our API gives you free access to the base models. If you're doing research, use our API for free access to Llama, Qwen 2.5 VL 72B, and whatever LLMs come out next.
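
For readers who prefer code to copy-paste, here is a minimal Python sketch that builds a chat request using only the standard library. The endpoint URL and the JSON field names (`model`, `messages`, `api_key`, `course_name`, `stream`, `temperature`) are assumptions modeled on what the request builder produces. Treat the builder's output as the source of truth and copy its exact values. Per the tip above, pointing `course_name` at a project with zero documents is what gives you plain base-model access.

```python
import json
import urllib.request

# ASSUMPTION: endpoint and field names; confirm them against the API request builder.
API_URL = "https://uiuc.chat/api/chat-api/chat"


def build_chat_request(
    api_key: str,
    course_name: str,
    model: str,
    question: str,
    temperature: float = 0.1,
) -> urllib.request.Request:
    """Build (but do not send) a POST request for a single-turn chat."""
    payload = {
        "model": model,                # model id as shown in the request builder
        "messages": [{"role": "user", "content": question}],
        "api_key": api_key,            # your UIUC.chat API key
        "course_name": course_name,    # project name; zero documents = plain base model
        "stream": False,               # assumed flag: ask for one complete response
        "temperature": temperature,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the raw response body as text."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return response.read().decode("utf-8")


# Usage (placeholders, not real values):
# req = build_chat_request("YOUR_API_KEY", "your-empty-project", "MODEL_ID", "Hello!")
# print(send(req))
```

Building the request separately from sending it makes it easy to inspect the exact payload before spending any tokens.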

Reply to say hi

Thank you! If you have any feedback or feature suggestions, just reply to this email and we'll build it for you.

And if you made it this far in the email, consider checking out our Patreon to support us starving students. Everything we build is free thanks to the University of Illinois and NCSA.
